Using review apps in the development of GitLab

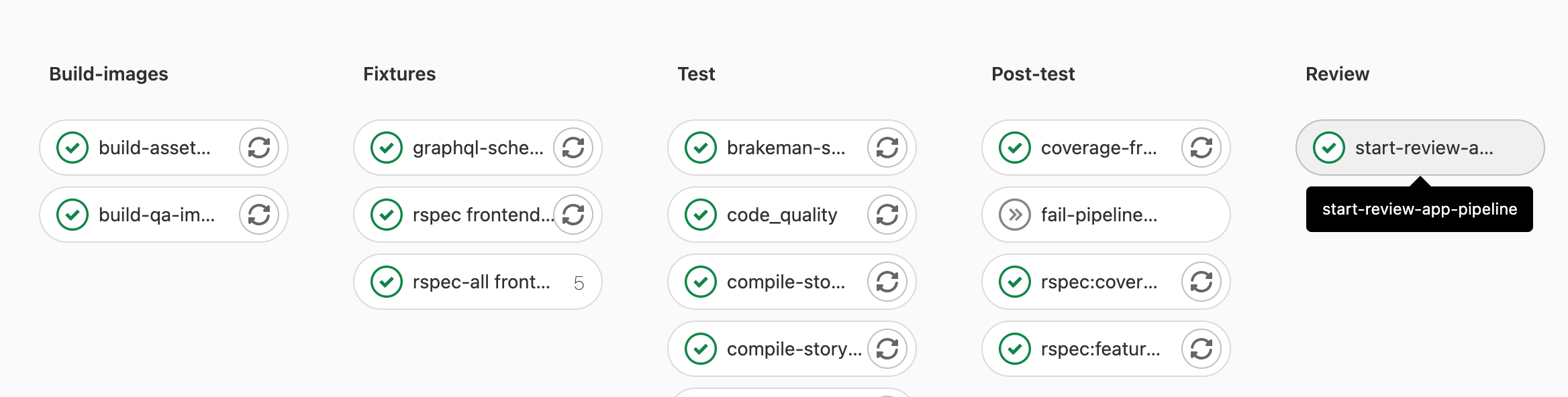

Review apps are deployed using the start-review-app-pipeline job which triggers a child pipeline containing a series of jobs to perform the various tasks needed to deploy a review app.

In any of the following scenarios, the start-review-app-pipeline job is started automatically (only when the merge request is approved):

- for merge requests with CI configuration changes

- for merge requests with frontend changes

- for merge requests with changes to {,ee/,jh/}{app/controllers}/**/*

- for merge requests with changes to {,ee/,jh/}{app/models}/**/*

- for merge requests with changes to {,ee/,jh/}lib/{,ee/,jh/}gitlab/**/*

- for merge requests with QA changes

- for scheduled pipelines

- the MR has the pipeline:run-review-app label set
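As a rough illustration of the three path patterns in the list above, the following Python sketch expands the brace alternatives into concrete directory prefixes and checks a list of changed files against them. It is not how the pipeline evaluates its rules: the function names are hypothetical, and treating `**/*` as "any file under this directory" is an assumption.

```python
from itertools import product

# The three alternatives inside each {,ee/,jh/} group.
EDITION_PREFIXES = ("", "ee/", "jh/")


def watched_directories():
    """Expand the brace patterns from the list above into directory prefixes."""
    dirs = set()
    for edition in EDITION_PREFIXES:
        # {,ee/,jh/}{app/controllers}/**/* and {,ee/,jh/}{app/models}/**/*
        dirs.add(f"{edition}app/controllers/")
        dirs.add(f"{edition}app/models/")
    for outer, inner in product(EDITION_PREFIXES, repeat=2):
        # {,ee/,jh/}lib/{,ee/,jh/}gitlab/**/*
        dirs.add(f"{outer}lib/{inner}gitlab/")
    return dirs


def touches_watched_path(changed_files):
    """Return True if any changed file lives under one of the watched directories."""
    dirs = watched_directories()
    # Assumption: "**/*" is approximated by a simple directory-prefix check.
    return any(path.startswith(d) for path in changed_files for d in dirs)
```

For example, `touches_watched_path(["ee/app/models/user.rb"])` is true, while a change limited to `doc/index.md` is not matched by these three patterns.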

E2E test runs on review apps

On every pipeline in the qa stage (which comes after the review stage), the review-qa-smoke and review-qa-blocking jobs are automatically started.

The qa stage consists of the following jobs:

- review-qa-smoke: small and fast subset of tests to validate core functionality of GitLab.

- review-qa-blocking: subset of tests that block the merge request. These tests are considered stable and are not allowed to fail.

- review-qa-non-blocking: rest of the e2e tests that can be triggered manually.

review-qa-* jobs ensure that end-to-end tests for the changes in the merge request pass in a live environment. This shifts the identification of e2e failures from an environment on the path to production to the merge request to prevent breaking features on GitLab.com or costly GitLab.com deployment blockers. If needed, review-qa-* failures should be investigated with an SET (software engineer in test) counterpart to help determine the root cause of the error.

After the end-to-end test runs have finished, Allure reports are generated and published by the e2e-test-report job. A comment with links to the reports is added to the merge request.

Errors can be found in the gitlab-review-apps Sentry project and are filterable by review app URL or commit SHA.

Bypass failed review app deployment to merge a broken master fix

Maintainers can elect to use the process for merging during broken master if a customer-critical merge request is blocked by pipelines failing due to review app deployment failures.

Performance Metrics

On every Review App child pipeline in the qa stage, the browser_performance job is automatically started: this job does basic browser performance testing using a Sitespeed.io Container.

Sample Data for review apps

Upon deployment of a review app, project data is created from the sample-gitlab-project template project. This aims to provide projects with prepopulated resources to facilitate manual and exploratory testing.

The sample projects will be created in the root user namespace and can be accessed from the personal projects list for that user.

How to

Redeploy review app from a clean slate

To reset a review app and redeploy it from a clean slate, do the following:

- Run the review-stop job.

- Redeploy by running or retrying the review-deploy job.

Doing this will remove all existing data from a previously deployed review app.

Get access to the GCP review apps cluster

You need to open an access request (internal link) for the gcp-review-apps-dev GCP group and role.

This grants you the following permissions for:

- Retrieving pod logs. Granted by Viewer (roles/viewer).

- Running a Rails console. Granted by Kubernetes Engine Developer (roles/container.pods.exec).

Sign in to my review app

For GitLab Team Members only. If you want to sign in to the review app, review the GitLab handbook information for the shared 1Password account.

- The default username is root.

- The password can be found in the 1Password login item named GitLab EE Review App.

Enable a feature flag for my review app

- Open your review app and sign in as documented above.

- Create a personal access token.

- Enable the feature flag using the Feature flag API.
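The last step can be sketched in Python. The sketch builds, but does not send, a `POST /api/v4/features/:name` request with `value=true`, authenticated with the personal access token in the `PRIVATE-TOKEN` header. The review app URL, token, and flag name used with it are placeholders, and the function name is hypothetical.

```python
from urllib.parse import quote, urlencode
from urllib.request import Request


def build_enable_flag_request(review_app_url, token, flag_name):
    """Build a request that sets a feature flag to true on a review app."""
    base = review_app_url.rstrip("/")
    url = f"{base}/api/v4/features/{quote(flag_name, safe='')}"
    # The Features API takes the desired state in the "value" parameter.
    body = urlencode({"value": "true"}).encode()
    return Request(
        url,
        data=body,
        method="POST",
        headers={"PRIVATE-TOKEN": token},
    )
```

Passing the returned object to `urllib.request.urlopen` would send it. Building the request separately makes it easy to inspect the URL and body before touching a live review app.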

Find my review app slug

- Open the review-deploy job.

- Look for ** Deploying review-*.

- For instance for ** Deploying review-1234-abc-defg... **, your review app slug would be review-1234-abc-defg in this case.
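If you have the job log as text, the same lookup can be scripted. This is a minimal sketch that assumes the slug consists only of lowercase letters, digits, and hyphens, as in the examples on this page. The function name is hypothetical.

```python
import re

# Matches the "** Deploying review-..." line printed by the deploy job.
# Assumption: slugs contain only lowercase letters, digits, and hyphens.
DEPLOY_LINE = re.compile(r"\*\* Deploying (review-[a-z0-9-]+)")


def find_review_app_slug(job_log):
    """Return the review app slug found in the job log, or None if absent."""
    match = DEPLOY_LINE.search(job_log)
    return match.group(1) if match else None
```

Given the sample line above, `find_review_app_slug("** Deploying review-1234-abc-defg... **")` returns `review-1234-abc-defg`.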

Run a Rails console

- Make sure you have access to the cluster and the container.pods.exec permission first.

- Filter Workloads by your review app slug. For example, review-qa-raise-e-12chm0.

- Find and open the toolbox Deployment. For example, review-qa-raise-e-12chm0-toolbox.

- Select the Pod in the "Managed pods" section. For example, review-qa-raise-e-12chm0-toolbox-d5455cc8-2lsvz.

- Select the KUBECTL dropdown list, then Exec -> toolbox.

- Replace -c toolbox -- ls with -it -- gitlab-rails console from the default command or

  - Run kubectl exec --namespace review-qa-raise-e-12chm0 review-qa-raise-e-12chm0-toolbox-d5455cc8-2lsvz -it -- gitlab-rails console and

    - Replace review-qa-raise-e-12chm0-toolbox-d5455cc8-2lsvz with your Pod's name.
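The kubectl command from the steps above can be assembled programmatically. This sketch assumes the Kubernetes namespace is identical to the review app slug, as in the example command. The function name is hypothetical.

```python
import shlex


def rails_console_command(slug, pod_name):
    """Return the kubectl argv that opens a Rails console in a toolbox Pod."""
    return [
        "kubectl", "exec",
        "--namespace", slug,  # assumption: namespace == review app slug
        pod_name,
        "-it", "--",
        "gitlab-rails", "console",
    ]


def rails_console_command_line(slug, pod_name):
    """Return the same command as a single shell-quoted string."""
    return shlex.join(rails_console_command(slug, pod_name))
```

The argv list can be passed to `subprocess.run` from an interactive terminal. The string form is convenient for copying into a shell.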

Dig into a Pod's logs

- Make sure you have access to the cluster and the container.pods.getLogs permission first.

- Filter Workloads by your review app slug. For example, review-qa-raise-e-12chm0.

- Find and open the migrations Deployment. For example, review-qa-raise-e-12chm0-migrations.1.

- Select the Pod in the "Managed pods" section. For example, review-qa-raise-e-12chm0-migrations.1-nqwtx.

- Select Container logs.

Alternatively, you could use the Logs Explorer which provides more utility to search logs. An example query for a pod name is as follows:

resource.labels.pod_name:"review-qa-raise-e-12chm0-migrations"

How does it work?

CI/CD architecture diagram

graph TD

A["build-qa-image, compile-production-assets<br/>(canonical default refs only)"];

B1[start-review-app-pipeline];

B[review-build-cng];

C["review-deploy<br><br>Helm deploys the review app using the Cloud<br/>Native images built by the CNG-mirror pipeline.<br><br>Cloud Native images are deployed to the `review-apps`<br>Kubernetes (GKE) cluster, in the GCP `gitlab-review-apps` project."];

D[CNG-mirror];

E[review-qa-smoke, review-qa-blocking, review-qa-non-blocking<br><br>gitlab-qa runs the e2e tests against the review app.];

A --> B1

B1 --> B

B -.->|triggers a CNG-mirror pipeline| D

D -.->|depends on the multi-project pipeline| B

B --> C

C --> E

subgraph "1. gitlab-org/gitlab parent pipeline"

A

B1

end

subgraph "2. gitlab-org/gitlab child pipeline"

B

C

E

end

subgraph "CNG-mirror pipeline"

D>Cloud Native images are built];

end

Detailed explanation

- On every pipeline during the prepare stage, the compile-production-assets job is automatically started.

  - Once it's done, the review-build-cng job starts since the CNG-mirror pipeline triggered in the following step depends on it.

- Once compile-production-assets is done, the review-build-cng job triggers a pipeline in the CNG-mirror project.

  - The review-build-cng job automatically starts only if your MR includes CI or frontend changes. In other cases, the job is manual.

  - The CNG-mirror pipeline creates the Docker images of each component (for example, gitlab-rails-ee, gitlab-shell, gitaly etc.) based on the commit from the GitLab pipeline and stores them in its registry.

  - We use the CNG-mirror project so that the CNG (Cloud Native GitLab) project's registry is not overloaded with a lot of transient Docker images.

- Once review-build-cng is done, the review-deploy job deploys the review app using the official GitLab Helm chart to the review-apps Kubernetes cluster on GCP.

  - The actual scripts used to deploy the review app can be found at scripts/review_apps/review-apps.sh.

  - These scripts are basically our official Auto DevOps scripts where the default CNG images are overridden with the images built and stored in the CNG-mirror project's registry.

  - Since we're using the official GitLab Helm chart, this means you get a dedicated environment for your branch that's very close to what it would look like in production.

  - Each review app is deployed to its own Kubernetes namespace. The namespace is based on the review app slug that is unique to each branch.

- Once the review-deploy job succeeds, you should be able to use your review app thanks to the direct link to it from the MR widget. To log into the review app, see "Sign in to my review app" above.
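The job ordering described above can be pictured as a small dependency graph. The Python sketch below is only an illustration: the job names come from this page, but the dictionary is a simplified, hand-written model and not the project's CI configuration. It leaves out the CNG-mirror pipeline that review-build-cng triggers and waits on.

```python
from graphlib import TopologicalSorter

# Each job maps to the set of jobs that must finish before it can start.
# Simplified model for illustration; not read from the real CI configuration.
DEPENDENCIES = {
    "compile-production-assets": set(),
    "review-build-cng": {"compile-production-assets"},
    "review-deploy": {"review-build-cng"},
    "review-qa-smoke": {"review-deploy"},
    "review-qa-blocking": {"review-deploy"},
}


def job_order():
    """Return one valid execution order for the modelled jobs."""
    return list(TopologicalSorter(DEPENDENCIES).static_order())
```

Any order returned by `job_order()` places compile-production-assets first and both review-qa-* jobs after review-deploy.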

Additional notes:

- If the review-deploy job keeps failing (and a manual retry didn't help), post a message in the #g_qe_engineering_productivity channel and/or create a ~"Engineering Productivity" ~"dx::review apps" ~"type::bug" issue with a link to your merge request. The deployment failure can reveal an actual problem introduced in your merge request (that is, this isn't necessarily a transient failure)!

- If the review-qa-smoke job keeps failing (we already retry them once), check the job's logs: you could discover an actual problem introduced in your merge request. You can also download the artifacts to see screenshots of the page at the time the failures occurred. If you don't find the cause of the failure or if it seems unrelated to your change, post a message in the #test-platform channel and/or create a ~Quality ~"type::bug" issue with a link to your merge request.

- The manual review-stop job can be used to stop a review app manually, and is also started by GitLab once a merge request's branch is deleted after being merged.

- The Kubernetes cluster is connected to the gitlab projects using the GitLab Kubernetes integration. This provides a link to the review app directly from the merge request widget.

Auto-stopping of review apps

Review apps are automatically stopped 2 days after the last deployment thanks to the Environment auto-stop feature.

If you need your review app to stay up for a longer time, you can pin its environment or retry the review-deploy job to update the "latest deployed at" time.

The review-cleanup job that automatically runs in scheduled pipelines stops stale review apps after 5 days, deletes their environment after 6 days, and cleans up any dangling Helm releases and Kubernetes resources after 7 days.
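To make the timeline concrete, the following Python sketch computes the earliest date for each lifecycle step from a given deployment time. It assumes that all thresholds are measured from the last deployment, which the text states only for the 2-day auto-stop. The labels and function name are hypothetical. Because review-cleanup runs in scheduled pipelines, the actual cleanup happens at the first scheduled run after each threshold.

```python
from datetime import datetime, timedelta

# Delays taken from this section.
# Assumption: every delay is counted from the last deployment.
LIFECYCLE = (
    ("environment auto-stop", timedelta(days=2)),
    ("review-cleanup stops stale review app", timedelta(days=5)),
    ("review-cleanup deletes environment", timedelta(days=6)),
    ("review-cleanup removes Helm releases and Kubernetes resources", timedelta(days=7)),
)


def lifecycle_deadlines(last_deployed_at):
    """Return (step, earliest datetime) pairs for a review app."""
    return [(step, last_deployed_at + delay) for step, delay in LIFECYCLE]
```

For a review app last deployed on 2024-01-01, the auto-stop falls on 2024-01-03 and the final cleanup threshold on 2024-01-08.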

Cluster configuration

The cluster is configured via Terraform in the engineering-productivity-infrastructure project.

Node pool image type must be Container-Optimized OS (cos), not Container-Optimized OS with Containerd (cos_containerd), due to this known issue on the Kubernetes executor for GitLab Runner.

Helm

The Helm version used is defined in the registry.gitlab.com/gitlab-org/gitlab-build-images:gitlab-helm3.5-kubectl1.17 image used by the review-deploy and review-stop jobs.

Diagnosing unhealthy review app releases

If review app stability dips, this may be a signal that the review-apps cluster is unhealthy.

Leading indicators may be health check failures leading to restarts or majority failure for review app deployments.

The review apps Overview dashboard aids in identifying load spikes on the cluster, and if nodes are problematic or the entire cluster is trending towards unhealthy.

See the review apps page of the Engineering Productivity Runbook for troubleshooting review app releases.

Frequently Asked Questions

Isn't it too much to trigger CNG image builds on every test run? This creates thousands of unused Docker images.

We have to start somewhere and improve later. Also, we're using the CNG-mirror project to store these Docker images so that we can just wipe out the registry at some point, and use a new fresh, empty one.

How do we secure this from abuse? Apps are open to the world so we need to find a way to limit it to only us.

This isn't enabled for forks.

Other resources

Helpful command-line tools

- K9s - provides a CLI dashboard across pods and enables filtering by labels

- Stern - enables cross pod log tailing based on label/field selectors